What if your AI could think like your company without ever disclosing your data to third parties? Companies are quickly moving toward private large language models for precisely this reason. It is no longer sufficient to rely just on public AI technologies in an era where data breaches and compliance issues are increasing.

This LLM development guide is your comprehensive road map for creating safe, effective AI systems that are customised for your company. Whether you’re planning to build private LLM solutions or exploring enterprise LLM development, this guide simplifies every step. You’ll learn how private large language models can give your business a powerful competitive edge, safely and efficiently.

What is a Private LLM? (Foundation of This LLM Development Guide)

A private large language model is an AI system that is built, trained, and deployed in a controlled environment. Unlike public AI tools, it is designed so that your company’s data never leaves your infrastructure.

Understanding this concept is essential to the rest of this LLM development guide. Because these models are built exclusively for internal use, businesses can improve accuracy and customise outputs.

Key distinctions include:

- Data control: Private models protect private information

- Customisation: Adapted to use cases unique to a business

- Compliance: Makes it easier to satisfy legal requirements

As custom LLM development expands, more businesses are using private large language models for internal automation, analytics, and customer interactions. Put simply, following an LLM development guide helps companies build strong and safe AI systems.

Why Enterprises Need Private LLMs

Today’s businesses handle enormous volumes of sensitive data. Because public AI tools often raise data-sharing concerns, private LLMs are frequently the better option.

The following explains why businesses are making significant investments in enterprise LLM development:

- Data Privacy: Sensitive data stays within your own systems.

- Regulatory Compliance: Complying with GDPR, HIPAA, and other regulations is simpler.

- Improved Accuracy: Models trained on internal data perform better on company-specific tasks.

- Scalability: Systems expand to meet your company’s demands.

Furthermore, businesses that develop private LLM solutions gain a competitive edge because they control how their AI behaves. As industries change, adopting private LLMs has become strategically important. For this reason, every business should follow a systematic LLM development guide before implementation.

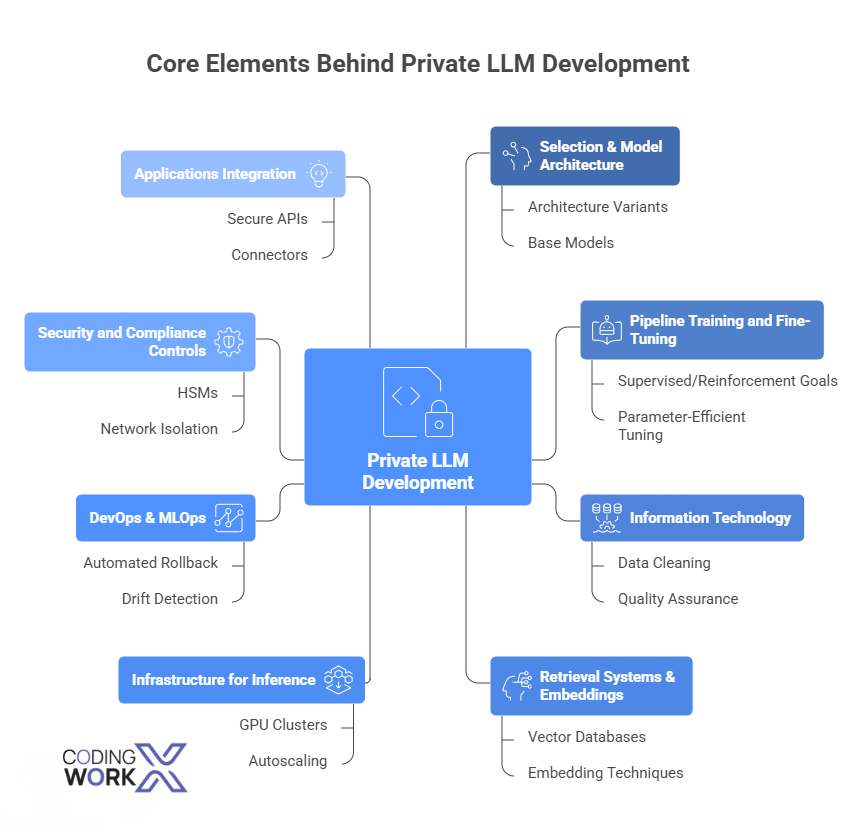

Key Elements of Private LLM Development

Developing private LLMs requires coordinating many organisational and technical elements. The fundamental pillars you need to build and operate are listed below.

1. Model Selection & Architecture

Select a base model (a licensed proprietary model or an open-source model such as LLaMA, Falcon, or Mistral, available on Hugging Face) and an architecture variant (decoder-only vs. encoder-decoder, with or without multi-modal extensions). Analyse the trade-offs between cost, latency, and model size.

2. Training and Fine-Tuning Pipelines

Establish repeatable pipelines for fine-tuning, including supervised and reinforcement learning objectives and parameter-efficient tuning (LoRA, adapters). Maintain strict version control for checkpoints and weights.
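As a rough illustration of why parameter-efficient tuning is attractive, the sketch below compares the number of trainable parameters for a full update of one linear layer with a LoRA-style low-rank update. The layer size (4096 x 4096) and the rank (8) are assumed values chosen for illustration, not recommendations.

```python
def full_update_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole d_out x d_in weight matrix is updated."""
    return d_in * d_out


def lora_update_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a LoRA update W + B @ A,
    where A has shape (rank, d_in) and B has shape (d_out, rank)."""
    return rank * d_in + d_out * rank


if __name__ == "__main__":
    d_in, d_out, rank = 4096, 4096, 8  # assumed, illustrative sizes
    full = full_update_params(d_in, d_out)
    lora = lora_update_params(d_in, d_out, rank)
    print(f"Full fine-tuning: {full:,} trainable parameters")
    print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
    print(f"LoRA share of full update: {lora / full:.2%}")
```

The base weights stay frozen in this scheme, so only the two small matrices need to be stored per fine-tuned variant, which also simplifies checkpoint versioning.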

3. Data Engineering

Clean, label, and curate training datasets. Implement quality assurance, data deduplication, and ETL procedures. Create pipelines to generate retrieval corpora and embeddings.
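The deduplication step can be sketched as follows. This is a minimal example that assumes records are plain strings and that exact duplicates after whitespace and case normalisation are the target; the function names are hypothetical, and near-duplicate detection would need a different technique.

```python
import hashlib
import re


def normalise(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text).strip().lower()


def deduplicate(records: list[str]) -> list[str]:
    """Keep the first occurrence of each record, comparing normalised text."""
    seen: set[str] = set()
    unique: list[str] = []
    for record in records:
        digest = hashlib.sha256(normalise(record).encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(record)
    return unique


if __name__ == "__main__":
    raw = ["Reset  your password", "reset your password ", "Open a ticket"]
    print(deduplicate(raw))
```

Hashing the normalised text rather than storing it keeps the memory footprint of the `seen` set small for large corpora.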

4. Retrieval Systems & Embeddings

Build reliable vector databases and embedding pipelines for retrieval-augmented generation (RAG). Embedding quality directly affects retrieval accuracy.
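To make the retrieval step concrete, here is a minimal sketch of similarity search over embeddings. The `embed` function is a toy stand-in (word counts) and is purely an assumption for illustration; a real system would call an embedding model and store vectors in a vector database.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, corpus: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:top_k]


if __name__ == "__main__":
    docs = [
        "refund policy for enterprise customers",
        "gpu cluster maintenance schedule",
        "how to reset a password",
    ]
    print(retrieve("enterprise refund policy", docs, top_k=1))
```

The retrieved passages would then be placed in the model prompt, which is why a weak embedding leads directly to weak answers.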

5. Infrastructure for Inference

For production workloads, provision GPU clusters, autoscaling, and inference optimisations (quantisation, batching). Design SLAs and monitoring around latency.
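Quantisation, one of the inference optimisations mentioned above, can be illustrated with a small sketch. It assumes symmetric per-tensor int8 quantisation on a plain list of floats; production serving frameworks use more elaborate schemes, so treat this only as a picture of the idea.

```python
def quantise_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantisation to the int8 range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantised = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantised, scale


def dequantise(quantised: list[int], scale: float) -> list[float]:
    """Map int8 values back to approximate floating-point weights."""
    return [q * scale for q in quantised]


if __name__ == "__main__":
    original = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantise_int8(original)
    restored = dequantise(q, scale)
    for w, r in zip(original, restored):
        print(f"{w:+.4f} -> {r:+.4f} (error {abs(w - r):.5f})")
```

Each weight is stored in one byte instead of two or four, at the cost of a rounding error of at most half the scale per weight.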

6. DevOps & MLOps

Automate CI/CD for models, canary deployments, rollback procedures, and drift and regression detection tests. Track metadata and model lineage.
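A regression check of this kind might look like the sketch below: a candidate model is promoted only if it stays within tolerance of the current baseline. The metric names and tolerance values are assumptions made for illustration and would need to be set per use case.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    accuracy: float        # fraction of evaluation prompts answered correctly
    p95_latency_ms: float  # 95th percentile response latency in milliseconds


def should_promote(
    baseline: EvalResult,
    candidate: EvalResult,
    max_accuracy_drop: float = 0.01,     # assumed tolerance
    max_latency_increase: float = 0.10,  # assumed tolerance (10%)
) -> bool:
    """Return True if the candidate is within tolerance of the baseline."""
    accuracy_ok = candidate.accuracy >= baseline.accuracy - max_accuracy_drop
    latency_limit = baseline.p95_latency_ms * (1 + max_latency_increase)
    latency_ok = candidate.p95_latency_ms <= latency_limit
    return accuracy_ok and latency_ok


if __name__ == "__main__":
    baseline = EvalResult(accuracy=0.90, p95_latency_ms=800)
    candidate = EvalResult(accuracy=0.91, p95_latency_ms=820)
    print("promote" if should_promote(baseline, candidate) else "roll back")
```

Running a gate like this in the CI/CD pipeline turns "regression detection" into an automatic pass or fail decision before a canary deployment starts.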

7. Security and Compliance Controls

Use HSMs for key management, network isolation, RBAC, logging, and encryption both in transit and at rest. Verify adherence to relevant standards.

8. Application Integration

To embed LLM capabilities in chatbots, search, knowledge workbenches, and business processes, expose secure APIs and connectors. Our AI agent development capabilities make this integration seamless and production-ready.

A strong private LLM program coordinates these elements with clear governance and team ownership.

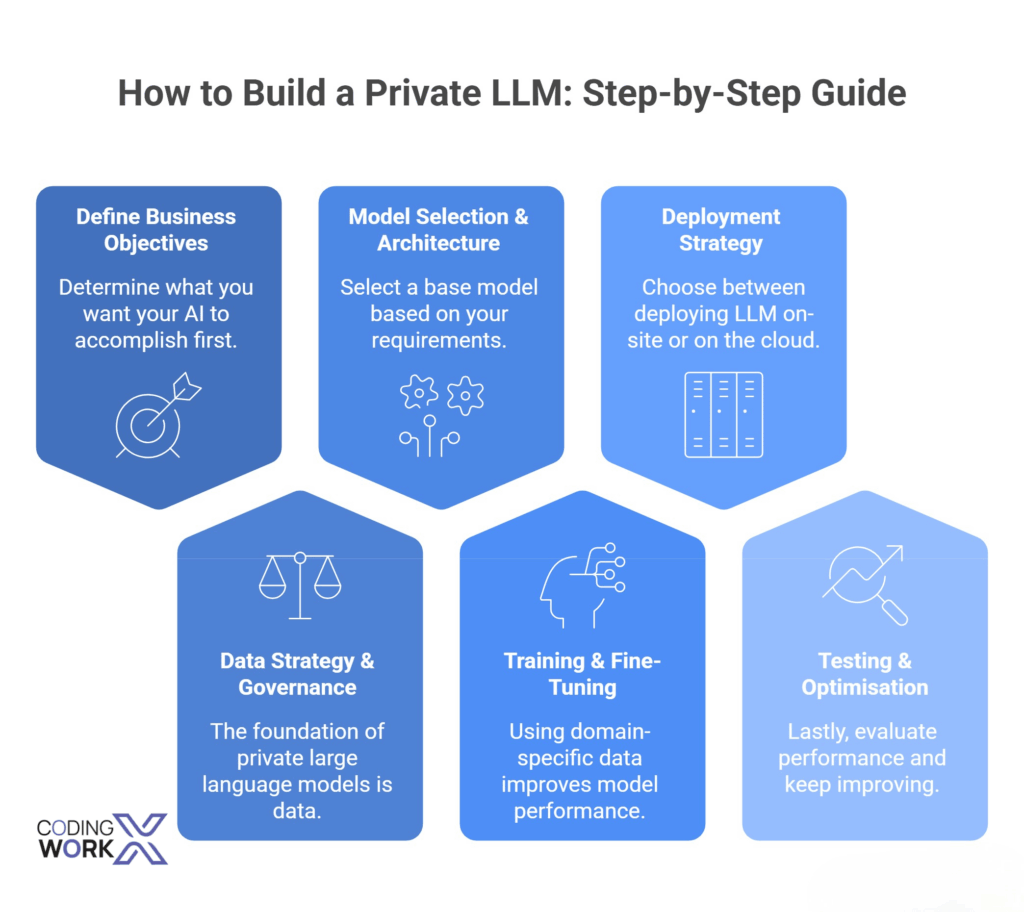

Step-by-Step LLM Development Guide to Build Private LLM

Step 1: Define Business Objectives

First, determine what you want your AI to accomplish. Clear objectives help align technology and business outcomes in enterprise LLM development. This guide advises mapping use cases such as automation, analytics, or customer support.

Step 2: Data Strategy & Governance

The foundation of private large language models is data. Effective data collection, cleaning, and governance are essential. Compliance and accuracy should be the top priorities for companies developing private LLM systems at this point.

Step 3: Model Selection & Architecture

Select a base model based on your requirements. While some businesses choose custom LLM development for more control, others look into open-source foundations. Any LLM development guide must include this crucial phase.

Step 4: Training & Fine-Tuning

Fine-tuning on domain-specific data improves model performance. This stage ensures the AI understands your business context.

Step 5: Deployment Strategy

Choose between deploying the LLM on-premises or in the cloud. For increased protection when working with private large language models, many businesses favour on-premises configurations. Our team of generative AI development experts can advise on the optimal deployment architecture for your compliance requirements.

Step 6: Testing & Optimisation

Lastly, evaluate performance and keep improving. Monitoring and improvement are constantly emphasised in a trustworthy LLM development guide.

Infrastructure Planning for Private LLMs

Strong infrastructure planning is necessary to ensure performance, reliability, and cost control.

1. Hardware and Compute

For production LLM workloads, GPU selection matters enormously. See the NVIDIA Developer Blog for GPU infrastructure best practices for LLM inference including benchmarks for A100/H100 configurations, PCIe bandwidth, and NVLink scaling.

- GPU selection: Select GPU types (e.g., NVIDIA A100/H100, or alternatives where supported) according to model size and throughput. Consider memory capacity, PCIe bandwidth, and NVLink.

- Accelerators: If your model stack supports them, consider TPUs or other hardware.

- Storage: NVMe and parallel file systems provide high-throughput, low-latency storage.

- Networking: To scale distributed training, use a low-latency interconnect such as InfiniBand.

2. Sizing and Capacity

Forecast your workloads: training cycles, fine-tuning runs, and production inference QPS (queries per second).

Make plans for burst capacity and peak demand (e.g., arrange spare capacity or private cloud burst).
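A back-of-the-envelope sizing calculation can be sketched as follows. It relies on one general fact (weight memory is roughly parameter count times bytes per parameter) and on assumed inputs: the 7-billion-parameter model, the per-replica throughput, and the 20% headroom are illustrative values, and the estimate deliberately ignores KV cache, activations, and runtime overhead.

```python
import math

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Memory for model weights only, in GB (10**9 bytes).
    Excludes KV cache, activations, and runtime overhead."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def replicas_needed(peak_qps: float, qps_per_replica: float, headroom: float = 0.2) -> int:
    """Replicas required to serve peak traffic with spare headroom."""
    return math.ceil(peak_qps * (1 + headroom) / qps_per_replica)


if __name__ == "__main__":
    params = 7e9  # assumed model size
    for precision in ("fp32", "fp16", "int8"):
        print(f"{precision}: {weight_memory_gb(params, precision):.1f} GB of weights")
    # Assumed traffic figures; measure per-replica throughput on your own hardware.
    print("replicas:", replicas_needed(peak_qps=50, qps_per_replica=8))
```

Real deployments need extra memory beyond the weights, so a figure from this sketch is a lower bound for GPU selection rather than a final answer.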

3. Facilities & Energy

Running costs and sustainability are impacted by power and cooling architecture, particularly for on-premises clusters. If facilities are limited, think about co-location or a managed private cloud.

4. Orchestration and Software

For GPU scheduling, use containerised stacks on Kubernetes with orchestration tools such as Kubeflow Pipelines or other MLOps platforms.

Implement model serving frameworks that facilitate autoscaling, quantisation, and batching. Our cloud and DevOps services team specialises in building these pipelines.

5. Security and Isolation

Apply physical access restrictions for on-premises hardware, network segmentation, and HSMs for key management. Use signed images and secure boot.

6. Telemetry and Observability

Track drift, error rates, latency, and GPU utilisation. Set up alerts and dashboards for proactive operations.
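As one example of a latency alert, the sketch below computes a nearest-rank 95th percentile over a window of request latencies and compares it with a threshold. The 500 ms threshold and the sample data are assumptions for illustration; in practice a monitoring stack would compute this for you.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% of the data at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_alert(latencies_ms: list[float], p95_threshold_ms: float) -> bool:
    """Return True when the p95 latency of the window exceeds the threshold."""
    return percentile(latencies_ms, 95) > p95_threshold_ms


if __name__ == "__main__":
    window = [120.0, 135.0, 110.0, 980.0, 125.0, 140.0, 130.0, 115.0]
    p95 = percentile(window, 95)
    print(f"p95 latency: {p95:.0f} ms, alert: {latency_alert(window, 500)}")
```

Alerting on a high percentile rather than the mean surfaces the slow requests that users actually notice.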

Effective infrastructure planning guarantees consistent performance for mission-critical services and matches capacity to business demand.

Deployment Comparison Table

| Deployment Type | Pros | Cons |
| --- | --- | --- |
| Cloud | Scalable, flexible | Data security concerns |
| On-premise | High security, control | Expensive setup |
| Hybrid | Balanced approach | Complex management |

Infrastructure choices have a direct impact on performance and cost in enterprise LLM development. This LLM development guide helps you select the best configuration for your requirements.

Challenges in Private LLM Development (and Solutions)

Private LLMs have drawbacks in addition to their many advantages. This LLM development guide can help you navigate them successfully.

Common Challenges:

- High computational expenses

- Shortage of qualified experts

- Problems with data quality

- Security risks

Solutions:

- Start small and work your way up

- Invest in knowledgeable personnel or collaborators such as Codingworkx

- Use clean, well-structured datasets

- Put robust security measures in place

Overcoming these obstacles is essential to the success of enterprise LLM development. Companies can reap long-term rewards by deliberately developing private LLM systems.

Use Cases of Private LLMs in Enterprises

Practical applications across businesses are highlighted in this LLM development guide.

Common Use Cases:

- Automated Customer Service: enterprise AI chatbot solutions powered by private LLMs deliver faster, data-safe responses.

- Knowledge Management: Internal data retrieval systems

- Processing Documents: Automating summaries and reports

- Industry Solutions: AI tools for finance, healthcare, and law

Businesses that invest in enterprise LLM development gain cost savings and increased productivity. Businesses retain complete control over AI-driven processes when they build private LLM solutions.

Best Practices for Enterprise LLM Development

Businesses need to use tried-and-true tactics to prosper. The best practices for successful implementation are described in this LLM development guide.

1. Start with Valuable Pilots

Select use cases that have well-defined success measures (e.g., time saved, error reduction, NPS improvement). To verify ROI, conduct a pilot in a controlled setting.

2. Implement a Hybrid Deployment Strategy

For speed, run the pilot in a private cloud; for sensitive production workloads, move to an on-premise environment. This allows for quick learning without compromising compliance.

3. Establish an MLOps Base

Invest in model registries, reproducible training pipelines, automated safety and bias checks, and CI/CD for models.

4. Use Lineage and Data Hygiene

Treat data as a first-class product. Before training, implement quality gates, record provenance, and version datasets.

Private large language models require continuous optimisation. Adhering to best practices in enterprise LLM development supports ROI and long-term success.

5. Enforce Approvals and Governance

Use a risk classification framework and require governance approval for high-risk use cases. When feasible, automate policy enforcement.
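Automated enforcement could start with something as simple as the sketch below. The three risk factors and the tier rule (count the factors) are a hypothetical rubric invented for illustration; a real framework would come from your legal and compliance teams.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def classify(handles_personal_data: bool, customer_facing: bool,
             automated_decisions: bool) -> RiskTier:
    """Hypothetical rubric: the more risk factors present, the higher the tier."""
    score = sum([handles_personal_data, customer_facing, automated_decisions])
    if score == 0:
        return RiskTier.LOW
    if score == 1:
        return RiskTier.MEDIUM
    return RiskTier.HIGH


def requires_governance_approval(tier: RiskTier) -> bool:
    """Only high-risk use cases are blocked until a governance board signs off."""
    return tier is RiskTier.HIGH


if __name__ == "__main__":
    tier = classify(handles_personal_data=True, customer_facing=True,
                    automated_decisions=False)
    print(tier.name, "needs approval:", requires_governance_approval(tier))
```

A check like this can run in the deployment pipeline so that a high-risk use case cannot ship without a recorded approval.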

6. Make Cost and Efficiency Improvements

Use distillation, quantisation, and parameter-efficient tuning (LoRA) to reduce resource usage. Reuse infrastructure across projects.

7. Design for Observability

Monitor the following key metrics: anomalous outputs, drift, latency, error rates, and user satisfaction. Integrate monitoring into incident workflows.

8. Invest in Cross-Functional Teams

To preserve compliance and expedite approvals without impeding delivery, pair data scientists with product, security, and legal stakeholders.

9. Plan the Model Lifecycle

Establish timetables for post-deployment evaluations, model retirement, and retraining.

10. Find the Right Partner

While maintaining strategic control over data and models, collaborate with vendors or system integrators to launch the program if internal capability is lacking.

By using these procedures, operational risk is decreased and time to value is shortened.

Challenges and Considerations

Businesses encounter many significant obstacles when putting private LLMs into practice, notwithstanding their great value. These factors should be considered beforehand to ensure the project’s success.

1. High Requirements for Computing

Training and deploying language models requires powerful GPUs, a well-designed architecture, and fast storage systems. A business must recognise that implementing an LLM is a full-fledged infrastructure project that requires either investing in its own servers or using a cloud environment optimised for the workload; it is not simply a matter of loading a model.

2. Legal and Ethical Risks

The use of AI in business is becoming more and more governed by legislation. It is crucial to consider compliance with regulations like GDPR, HIPAA, and PCI DSS while handling financial, medical, or personal data.

Reputational risks should also be taken into account: the model should be designed to avoid producing responses that are biased, misleading, or harmful. Guardrails, output filters, and clear control over the training data help mitigate these problems.

3. Interpretability and Quality of Results

Even a well-trained model is prone to error, particularly in novel or uncommon situations. The main challenges are ensuring that its results can be explained, that its responses can be verified, and that it informs the user of its limitations. Without these safeguards, the LLM can produce false or inaccurate information while sounding confident.

In Conclusion

Private LLMs are redefining how businesses use AI. Organisations can create safe, scalable, and effective AI systems by following a disciplined LLM development guide.

Investing in enterprise LLM development is a wise choice, regardless of how big your company is. Building private LLM solutions that offer you complete control, improved performance, and long-term value is now possible.

FAQs

1. How long does it take to build a private LLM?

A RAG-based pilot using an existing open-source model typically takes 6-12 weeks from planning to deployment. 4-8 weeks are added for domain-specific data fine-tuning. It takes 6-18 months and significant GPU resources to fully customise pre-training from scratch. For speed, most businesses begin with RAG and then add fine-tuning for accuracy.

2. How much does it cost to build a private LLM?

The cost of developing a private LLM varies depending on infrastructure, data amount, and complexity. Depending on scalability and customisation requirements, enterprise LLM development can range from modest expenses to significant investments.

3. Is public AI better than private LLM?

Private large language models are better for enterprises needing data security, compliance, and customisation. Unlike public AI, they ensure sensitive data stays protected while delivering more accurate, business-specific results.

4. Which industry gains the most from private LLMs?

Private large language models are most beneficial to industries such as healthcare, finance, legal, and IT, because of their rigorous data privacy regulations, regulatory constraints, and demand for highly accurate, domain-specific AI solutions.